The 4 Phases of Enterprise AI Adoption (and Why Security Must Catch Up)

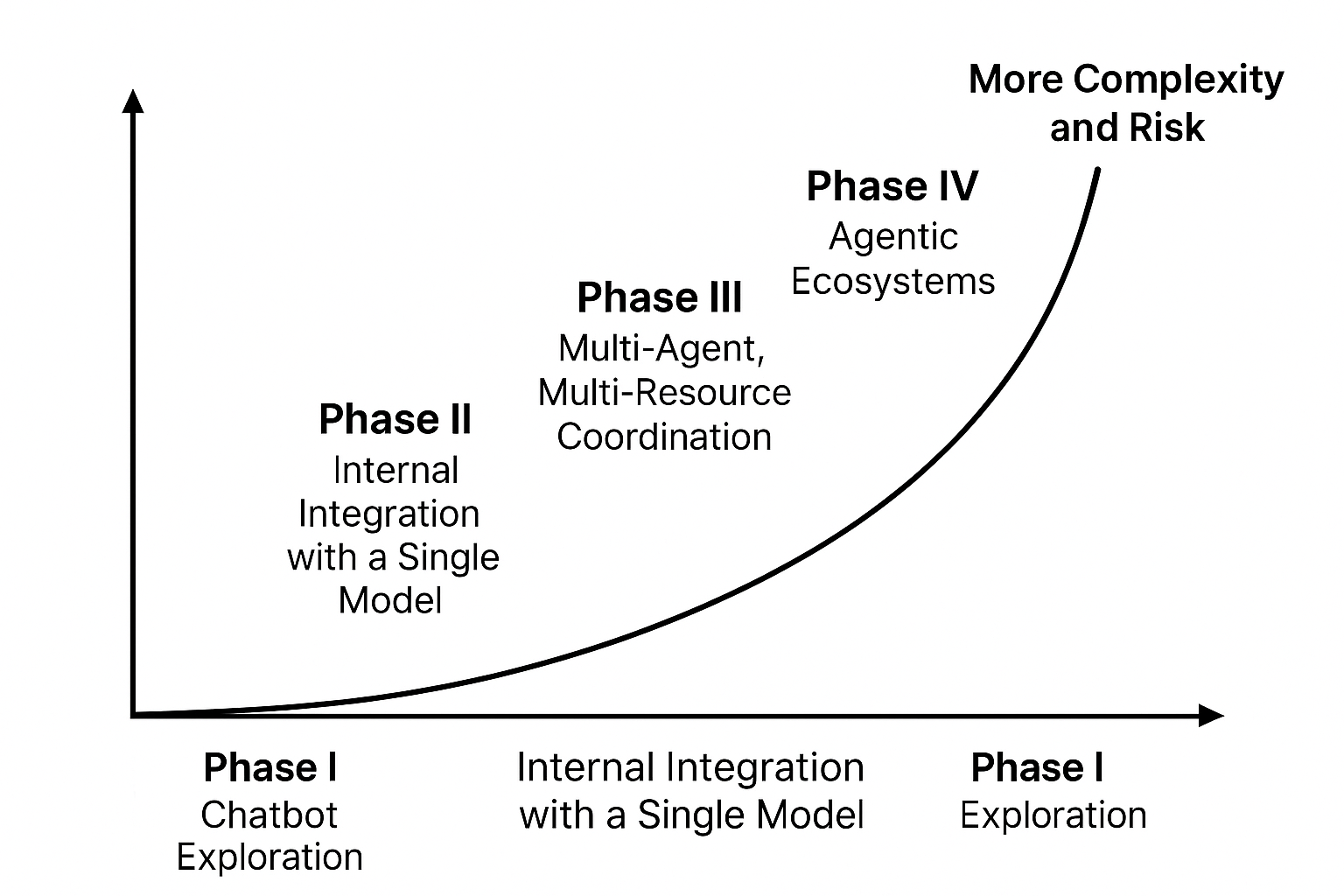

As enterprises rush to adopt AI, most start with safe, isolated experiments. But real transformation happens when AI becomes deeply embedded in systems, processes, and decision-making. At that point, traditional security breaks down.

Drawing from our experience securing critical infrastructure with Named Data Networking (NDN), we’ve mapped out what we see as the four phases of enterprise AI adoption and how Zero Trust needs to evolve at each step.

Phase I: Chatbot Exploration

In this first phase, enterprises typically experiment with generative AI by integrating basic chat interfaces. These might be customer-facing bots or internal productivity assistants for HR, IT support, or general Q&A. The tools used are often SaaS-based platforms like ChatGPT or Copilot, accessed through APIs or front-end apps.

The implementations are lightweight, with little or no access to internal systems or sensitive data. These deployments are easy to spin up and provide a quick demonstration of AI’s potential value.

Security/Privacy Risk: Low to moderate

- Data stays in the cloud
- Limited internal integration
- High risk of shadow AI use or data leakage if not monitored

Zero Trust Consideration:

- Endpoint and identity hygiene
- Basic visibility into usage
- SaaS vendor security due diligence
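One way to get basic visibility into usage is to screen prompts before they leave for a SaaS model. The sketch below is a minimal illustration under stated assumptions: the pattern names and regular expressions are hypothetical placeholders, not a vetted data-loss-prevention rule set, and `screen_prompt` is a name invented for this example.

```python
import re

# Hypothetical patterns for illustration only; a real deployment would rely on
# the organization's own data-loss-prevention rules.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "api_key": re.compile(r"\b(?:sk|key)-[A-Za-z0-9]{16,}\b"),
}


def screen_prompt(prompt: str) -> tuple[str, list[str]]:
    """Redact matches and return (redacted_prompt, names of patterns that fired)."""
    findings = []
    redacted = prompt
    for name, pattern in SENSITIVE_PATTERNS.items():
        redacted, count = pattern.subn(f"[REDACTED:{name}]", redacted)
        if count:
            findings.append(name)
    return redacted, findings


redacted, findings = screen_prompt(
    "Reset the password for jane.doe@example.com, SSN 123-45-6789."
)
print(redacted)   # the prompt that would be forwarded to the SaaS model
print(findings)   # which pattern names fired, suitable for a usage log
```

The list of findings can feed a usage log, which gives security teams a first signal of what employees are sending to external tools.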

Reality: This is where many enterprises are today. Useful, low-friction, but not transformative.

Phase II: Internal Integration with a Single Model

As confidence in AI grows, many enterprises take the next step by integrating a single large language model (LLM) with internal data. This often involves retrieval-augmented generation (RAG), where the model pulls from internal knowledge bases, file shares, or documentation repositories to generate responses.

Deployments move to private cloud or on-prem environments for better control. This is the first time AI starts to touch sensitive or proprietary information, requiring enterprises to rethink how data access is managed and monitored.

Security/Privacy Risk: High

- Sensitive data access (e.g., contracts, customer records)
- Model may act on behalf of users

Zero Trust Consideration:

- Access control must extend to model prompts and outputs
- Logging and auditing become critical
- Authentication for model-to-data connections
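To make the access-control and auditing points concrete, here is a minimal sketch of permission-aware retrieval for a RAG pipeline. It assumes each document carries a set of groups allowed to read it, and it uses naive keyword overlap as a stand-in for a real vector search. The names `Document`, `AuditLog`, and `retrieve_for_user` are hypothetical and do not come from any particular framework.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to read this document


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user: str, query: str, returned: list, denied: list) -> None:
        self.entries.append(
            {"user": user, "query": query, "returned": returned, "denied": denied}
        )


def retrieve_for_user(query, user, user_groups, corpus, audit, top_k=3):
    """Return only documents the user may read, and log what was withheld."""
    terms = set(query.lower().split())
    allowed, denied = [], []
    for doc in corpus:
        # Naive keyword overlap stands in for a real vector search.
        score = len(terms & set(doc.text.lower().split()))
        if score == 0:
            continue
        if doc.allowed_groups & user_groups:
            allowed.append((score, doc))
        else:
            denied.append(doc.doc_id)
    allowed.sort(key=lambda pair: pair[0], reverse=True)
    results = [doc for _, doc in allowed[:top_k]]
    audit.record(user, query, [d.doc_id for d in results], denied)
    return results


corpus = [
    Document("hr-001", "vacation policy for all employees", frozenset({"staff"})),
    Document("legal-007", "acquisition contract policy terms", frozenset({"legal"})),
]
audit = AuditLog()
results = retrieve_for_user(
    "contract policy", "alice", frozenset({"staff"}), corpus, audit
)
print([d.doc_id for d in results])   # documents that may reach the model's context
print(audit.entries[-1]["denied"])   # relevant documents withheld from this user
```

The design choice that matters is that filtering happens before anything reaches the model's context window, so the model cannot leak a document the requesting user was never allowed to see.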

Reality: This is the moment when AI stops being just a productivity booster and starts to impact real systems.

Phase III: Multi-Agent, Multi-Resource Coordination

By Phase III, AI becomes a more integral part of enterprise workflows. Multiple models or agents begin to coordinate with each other, interacting across business systems such as CRMs, ERPs, and databases. Agents can generate reports, take actions, and delegate tasks, often without human initiation.

This phase introduces tremendous efficiency but also increased risk. The security model must now account for dynamic, inter-agent communication, where traditional tools like IAM or static ACLs start to falter. This is where most Zero Trust implementations begin to fall apart.

Security/Privacy Risk: Very High

- Data flows across boundaries
- Agents may act without human supervision
- Traditional IAM can’t model dynamic trust relationships

Zero Trust Consideration:

- Identity must shift from user-based to agent-based
- Control must follow the data, not the perimeter
- Policy must travel with each piece of data
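The idea that policy travels with the data can be sketched in a few lines. In the illustration below, every data object carries its own policy, and that policy is re-evaluated against the receiving agent's identity on each hand-off, regardless of which network path the data took. All class names, the role model, and the `/acme/...` naming scheme are assumptions made for this example; they are not Operant's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    name: str          # hypothetical hierarchical name for the agent
    roles: frozenset


@dataclass(frozen=True)
class Policy:
    allowed_roles: frozenset
    allowed_actions: frozenset
    may_delegate: bool = False


@dataclass(frozen=True)
class ProtectedData:
    name: str
    payload: str
    policy: Policy     # the policy is part of the data object itself


def authorize(agent: AgentIdentity, data: ProtectedData, action: str) -> bool:
    """Decide from the data's own policy, not from where the request came from."""
    policy = data.policy
    return action in policy.allowed_actions and bool(agent.roles & policy.allowed_roles)


def hand_off(sender: AgentIdentity, receiver: AgentIdentity, data: ProtectedData) -> ProtectedData:
    """Pass data between agents; the attached policy is checked again for the receiver."""
    if not authorize(sender, data, "read"):
        raise PermissionError(f"{sender.name} may not read {data.name}")
    if not data.policy.may_delegate:
        raise PermissionError(f"{data.name} may not be delegated")
    if not authorize(receiver, data, "read"):
        raise PermissionError(f"{receiver.name} may not read {data.name}")
    return data  # same object, same policy


report_writer = AgentIdentity("/acme/agents/report-writer", frozenset({"finance"}))
auditor = AgentIdentity("/acme/agents/auditor", frozenset({"finance", "audit"}))
email_bot = AgentIdentity("/acme/agents/email-bot", frozenset({"marketing"}))
forecast = ProtectedData(
    name="/acme/finance/q3-forecast",
    payload="Q3 revenue forecast (placeholder)",
    policy=Policy(frozenset({"finance"}), frozenset({"read"}), may_delegate=True),
)

print(hand_off(report_writer, auditor, forecast).name)
try:
    hand_off(report_writer, email_bot, forecast)
except PermissionError as err:
    print("blocked:", err)
```

Because the check depends only on the agent's identity and the policy attached to the data, it behaves the same way whether the two agents sit in the same process or in different networks.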

Reality: Most security models start to break here. Traditional Zero Trust tools don’t scale to dynamic, machine-to-machine contexts.

Phase IV: Agentic Ecosystems

In the final phase, AI agents gain true autonomy. They interact not just within an organization, but across organizational boundaries. Agents may collaborate, make decisions, and act on behalf of humans or other systems. This is the beginning of agentic ecosystems.

Here, AI is no longer a tool; it becomes an actor. Trust must be established between machines, across domains, and in real time. Without a foundational shift in security architecture, visibility and control can collapse entirely.

Security/Privacy Risk: Critical

- Complete loss of visibility without architectural changes
- Attack surface becomes machine-native

Zero Trust Consideration:

- Identity must be cryptographically verifiable
- Trust must be embedded in the data layer
- Architecture must support decentralization, verification, and fine-grained policy
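As a rough illustration of trust embedded in the data layer, the sketch below binds a name, content, policy, and signer together under one signature, so a receiver can verify the packet itself and not the channel it arrived on. Two loud assumptions apply. First, HMAC-SHA256 from the Python standard library is used here as a stand-in; a cross-organization deployment would need asymmetric signatures so that verifiers do not hold signing keys. Second, the packet layout is hypothetical and is not the NDN wire format or Operant's MPT design.

```python
import hashlib
import hmac
import json


def _canonical(body: dict) -> bytes:
    # Stable serialization so signer and verifier hash identical bytes.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_packet(name: str, content: str, policy: dict, signer: str, key: bytes) -> dict:
    """Bind name, content, policy, and signer together under one signature."""
    body = {"name": name, "content": content, "policy": policy, "signer": signer}
    signature = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return {**body, "signature": signature}


def verify_packet(packet: dict, trusted_keys: dict) -> bool:
    """Accept a packet only if its signer is trusted and nothing was altered."""
    key = trusted_keys.get(packet.get("signer"))
    if key is None:
        return False
    body = {k: v for k, v in packet.items() if k != "signature"}
    expected = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, str(packet.get("signature", "")))


# Hypothetical agent name and demo key; never hard-code real keys.
trusted_keys = {"/acme/agents/pricing": b"demo-key-not-for-production"}
packet = sign_packet(
    name="/acme/pricing/quote/42",
    content="unit price: 19.99",
    policy={"allowed_roles": ["partner-procurement"]},
    signer="/acme/agents/pricing",
    key=trusted_keys["/acme/agents/pricing"],
)
print(verify_packet(packet, trusted_keys))

# Loosening the policy in transit invalidates the signature.
tampered = {**packet, "policy": {"allowed_roles": ["anyone"]}}
print(verify_packet(tampered, trusted_keys))
```

Because the policy is covered by the signature, an intermediary cannot loosen it without invalidating the packet, which is the property the "trust embedded in the data layer" bullet points at.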

Reality: Few organizations are here yet, but this phase is arriving faster than expected.

What Needs to Change

Traditional Zero Trust assumes a user, a device, and a perimeter. That works in Phase I and maybe Phase II. But for AI in Phases III and IV, security must shift:

- From network-based to data-centric

- From user identity to machine-native identity

- From policy at the edge to policy within the data

At Operant, we’re building for this future.

Our Multi-Part Trust (MPT) platform, powered by Named Data Networking (NDN), enables:

- Named identities for agents and data
- Policy enforcement inside the control plane
- Secure, autonomous agent-to-agent communication
